|

One of my favorite ideas in all of mathematics is to study the topology of a space by studying functions on the space. This is the underlying idea of Morse theory, which I hope to learn more about. A huge set of examples of this idea that I am more familiar with comes from complex analysis.

One may observe that in the examples that I have given, the functions we are studying to probe the topology possess some non-topological properties. For instance, holomorphic functions famously have some extremely strong properties most of which are not topological in nature at all. The same goes for harmonic functions, which share many properties with holomorphic functions. In general, differentiability is not a property of a function that interacts much at all with the domain topology. So it is natural to wonder if we can study the topology of a space by studying functions that obey no assumption other than the assumption that they interact somehow with the topology. It also seems reasonable that the topology should be uniquely determined by such functions. More precisely, let \(X\) be a topological space and let \(C(X)\) be the ring of real-valued continuous functions on \(X\). Question: Can one recover the topology on \(X\) given \(C(X)\)? In this blog post, we will show that the answer is in the affirmative. We will focus on the following special case: fix \(X\) to be a compact Hausdorff topological space. Consider the spectrum of the ring, \(C(X)\), which we denote \(\text{Spec }C(X)\). We can interpret the spectrum as a topological space by giving it the Zariski topology. Let \(\mathscr{M}\) be the set of maximal ideals of \(C(X)\). Since every maximal ideal is prime, \(\mathscr{M}\subseteq\text{Spec }C(X)\) and we can endow \(\mathscr{M}\) with the subspace topology. We sometimes refer to \(\mathscr{M}\) as the maximal spectrum of \(C(X)\). The incredible fact which we will prove is that \(X\) is homeomorphic to \(\mathscr{M}\). For every \(x\in X\) define \(I_x=\left\{f\in C(X)\colon f(x)=0\right\}\). Clearly, \(I_x\) is an ideal of \(C(X)\). What is less clear is that \(I_x\) is always a maximal ideal. We will show this in two different ways. In the first method, we will show that any ideal properly containing \(I_x\) is the full ring \(C(X)\). 
Fix \(x\in X\) and pick \(g\in C(X)\setminus I_x\). Since \(X\) is Hausdorff, \(\{x\}\) is closed, and since \(g\) is continuous, \(g^{-1}(\{0\})\) is closed (and disjoint from \(\{x\}\) since \(g\notin I_x\)). Recall that every compact Hausdorff space is normal (\(T_4\)), so by Urysohn's lemma, there exists a continuous function \(f\colon X\to\mathbb{R}\) such that \(f(x)=0\) but \(f(y)=1\) for all \(y\in g^{-1}(\{0\})\). Notice that \(f\in I_x\). Moreover, \(f\) and \(g\) have no common zeros by construction. Therefore, \(f^2+g^2\in\langle f,g\rangle\) is always positive, so the multiplicative inverse \(\frac{1}{f^2+g^2}\) exists in \(C(X)\). Since ideals are closed under multiplication by any element of the ring, \(\chi_X=(f^2+g^2)\cdot\frac{1}{f^2+g^2}\in\langle f,g\rangle\). So the ideal \(\langle f,g\rangle\) contains the identity element of the ring and thus \[C(X)=\langle f,g\rangle\subseteq\langle I_x,g\rangle\subseteq C(X).\] Since \(g\notin I_x\) was arbitrary, any ideal properly containing \(I_x\) is all of \(C(X)\). Hence, \(I_x\) is a maximal ideal as claimed. We have established that \(\{I_x\}_{x\in X}\subseteq\mathscr{M}\).

It turns out that this method is quite clumsy. A quicker way to establish that \(I_x\) is maximal is to notice that it is the kernel of the evaluation homomorphism \(C(X)\to\mathbb{R}\) that maps \(f\mapsto f(x)\). Since the homomorphism is clearly surjective, the first isomorphism theorem tells us that \(C(X)/I_x\cong\mathbb{R}\), which is a field. This immediately tells us that \(I_x\) is maximal. Hence, Urysohn's lemma is not (yet) required. The fact that \(\{I_x\}_{x\in X}\subseteq\mathscr{M}\) is purely algebraic.

We want to establish the reverse inclusion as well. This is tantamount to showing that every maximal ideal of \(C(X)\) is of the form \(I_x\) for an appropriate choice of \(x\in X\). Let us study a "rogue" maximal ideal \(I\) that is not of the form \(I_x\) for any \(x\in X\). Since \(I\) is maximal and \(I_x\) is maximal for every \(x\in X\), the containment \(I\subseteq I_x\) would immediately imply \(I=I_x\). 
Hence, \(I\) is not contained in any ideal of the form \(I_x\). This means that for each \(x\in X\), there exists \(f_x\in I\) such that \(f_x(x)\neq0\). For each \(x\in X\), by the continuity of each \(f_x\) and the fact that \(f_x(x)\neq0\), there exists an open neighborhood \(U_x\) of \(x\) such that \(0\notin f_x(U_x)\). This gives us an open cover \(\{U_x\}_{x\in X}\) (notice that to form this open cover, we are invoking the axiom of choice). By compactness, we may extract a finite subcover \(\left\{U_{x_j}\right\}_{j=1}^{n}\). By construction, for each \(x\in X\), there exists at least one \(1\leq j\leq n\) such that \(f_{x_j}(x)\neq0\). So the functions \(f_{x_1},\dots,f_{x_n}\) have no common zero. This means that the function \(f_{x_1}^2+\dots+f_{x_n}^2\) is always positive and so \(\frac{1}{f_{x_1}^2+\dots+f_{x_n}^2}\) is a well-defined continuous function on \(X\). Since \(f_{x_1}^2+\dots+f_{x_n}^2\in I\), we have that \(\chi_X=(f_{x_1}^2+\dots+f_{x_n}^2)\cdot\frac{1}{f_{x_1}^2+\dots+f_{x_n}^2}\in I\). This is a contradiction: no maximal ideal is the unit ideal. Hence, no "rogue" maximal ideals exist. This establishes that \(\mathscr{M}=\{I_x\}_{x\in X}\). Notice the paragraph above uses the same sum of squares trick that we used when we clumsily showed that \(\{I_x\}_{x\in X}\subseteq\mathscr{M}\). In particular, we are using the general fact that the ideal generated by any finite collection of functions in \(C(X)\) that share no common zero is the unit ideal. This is what we have essentially proven in the previous paragraph. Now consider the well-defined map \(\varphi\colon X\to\mathscr{M}\) defined by \(\varphi(x)=I_x\). The above establishes that this map is a surjection. A more subtle point is injectivity. This is where we truly need Urysohn's lemma. Pick \(x,y\in X\) to be distinct points. 
Since compact Hausdorff spaces are normal, and \(\{x\}\) and \(\{y\}\) are disjoint closed sets, by Urysohn's lemma there exists \(f\in C(X)\) such that \(f(x)=0\) and \(f(y)=1\neq0\). This shows that \(I_x\neq I_y\), which establishes that \(\varphi\) is an injection and thus a bijection.

We will establish that \(\varphi\) is in fact a homeomorphism. To do this, we will construct a basis for the topology of \(X\) and for the topology of \(\mathscr{M}\), and show that \(\varphi\) induces a bijection between those bases. For each \(f\in C(X)\), define \[U_f=f^{-1}\left(\mathbb{R}\setminus\{0\}\right),\qquad \tilde{U}_f=\left\{I\in\mathscr{M}\colon f\notin I\right\}.\] We claim that \(\{U_f\}_{f\in C(X)}\) and \(\{\tilde{U}_f\}_{f\in C(X)}\) form bases for the topologies on \(X\) and \(\mathscr{M}\), respectively. To check this, we will use the following standard result from point-set topology. A collection of open subsets \(\mathscr{E}\) of a topological space is a basis for the topology if and only if for every open set \(U\) and every point \(x\in U\), there exists \(E\in\mathscr{E}\) such that \(x\in E\subseteq U\).

We continue to establish the claim that \(\{\tilde{U}_f\}_{f\in C(X)}\) forms a basis for the topology on \(\mathscr{M}\). This is easy with a little knowledge of the Zariski topology on the spectrum of a ring. Define \[X_f=\left\{I\in\text{Spec }C(X)\colon f\notin I\right\}.\] It is a standard fact that \(\{X_f\}_{f\in C(X)}\) forms a basis for the Zariski topology. It is also clear that since \(\tilde{U}_f=\mathscr{M}\cap X_f\) for every \(f\in C(X)\), we have that \(\{\tilde{U}_f\}_{f\in C(X)}\) forms a basis for the subspace topology on \(\mathscr{M}\). Finally, we will establish that for every \(f\in C(X)\), we have \(\varphi(U_f)=\tilde{U}_f\). But this can be done in a single line. \[\varphi(U_f)=\left\{I_x\in\mathscr{M}\colon f(x)\neq0\right\}=\left\{I\in\mathscr{M}\colon f\notin I\right\}=\tilde{U}_f.\] We conclude that \(\varphi\) is a homeomorphism. What is interesting is how we employed the assumptions that \(X\) is Hausdorff and compact. Urysohn's lemma was used in a crucial way to establish that \(\varphi\) is injective, and for this we needed that \(X\) is normal (which uses both assumptions). The compactness assumption was used by itself in the proof that \(\varphi\) is surjective (i.e., the proof of the fact that \(\mathscr{M}=\{I_x\}_{x\in X}\)). However, the astute reader may argue that by proving that \(\{U_f\}_{f\in C(X)}\) forms a basis for the topology on \(X\), we accomplished exactly what we wanted to: we found a way to reconstruct the topology of \(X\) given \(C(X)\). In particular, we used the elements of \(C(X)\) to construct a basis for the topology on \(X\). In doing this, we used no assumption on \(X\) at all; we did not use the assumptions that \(X\) is Hausdorff and compact. Indeed, this construction is valid for any topological space. The issue is that the construction relies heavily on an understanding of the individual continuous functions in \(C(X)\). 
Usually, it is very difficult to compute preimages of arbitrary continuous functions on \(X\). Hence, we would like a better, more direct way to characterize the topology on \(X\). Showing that \(X\) is homeomorphic to \(\mathscr{M}\) (at the expense of some assumptions) gives us a complete picture of the topology (not just a basis) and it relies more on the ring structure of \(C(X)\) than the actual behaviors of the functions in \(C(X)\). From a theoretical point of view, this is a "nicer" characterization of the topology. It is an entirely algebraic characterization. So while it is true that \(C(X)\) always uniquely determines the topology on \(X\), there is an especially nice algebraic way to represent this topology in the case that \(X\) is compact and Hausdorff.

This raises the question: what goes wrong with our algebraic characterization when we remove either the assumption of compactness or of being Hausdorff? Since the Hausdorff assumption is a separation axiom, it is fairly intuitive why things may go wrong if it is removed. What is more interesting is what happens if we remove compactness.

Let us study what happens when we remove the compactness assumption from a topological subspace \(X\subseteq\mathbb{R}\). By the Heine-Borel theorem, compactness in this context is equivalent to being closed and bounded, so let us separately remove the assumption of being closed and the assumption of being bounded to see what goes wrong in both cases.

First, suppose \(X=(0,1)\). This is a set that is bounded but not closed. Let \(J=\left\{f\in C(X)\colon\lim_{y\to 1^-}{f(y)}=0\right\}\). It is easy to check that \(J\) is an ideal, and it is proper since the constant function \(1\) is not in \(J\). However, \(J\) is not contained in \(I_x\) for any \(x\in X\), because the function \(g(y)=y-y^2\) is in \(J\) but not in any \(I_x\), since \(g\) is positive on \(X\). Therefore, any maximal ideal containing \(J\) (one exists by Zorn's lemma) is none of the \(I_x\). So in this case, the inclusion \(\{I_x\}_{x\in X}\subseteq\mathscr{M}\) is strict. 
Now, suppose that \(X=[0,\infty)\). This is a set that is closed but not bounded. In this case, let \(J=\left\{f\in C(X)\colon\lim_{y\to\infty}{f(y)}=0\right\}\). Once again, this is a proper ideal. Moreover, the function \(g(y)=e^{-y}\) is in \(J\) but in none of the \(I_x\), since \(g\) is positive on \(X\). As before, any maximal ideal containing \(J\) is none of the \(I_x\), so in this case as well, the inclusion \(\{I_x\}_{x\in X}\subseteq\mathscr{M}\) is strict.

There is one last interesting note. Recall that when we formed an open cover in the argument, we remarked that we were invoking the axiom of choice. This was used to establish that \(\mathscr{M}=\{I_x\}_{x\in X}\). It turns out that this equality can be proven without the axiom of choice when \(X\) is assumed to be a complete, totally bounded metric space. See here.


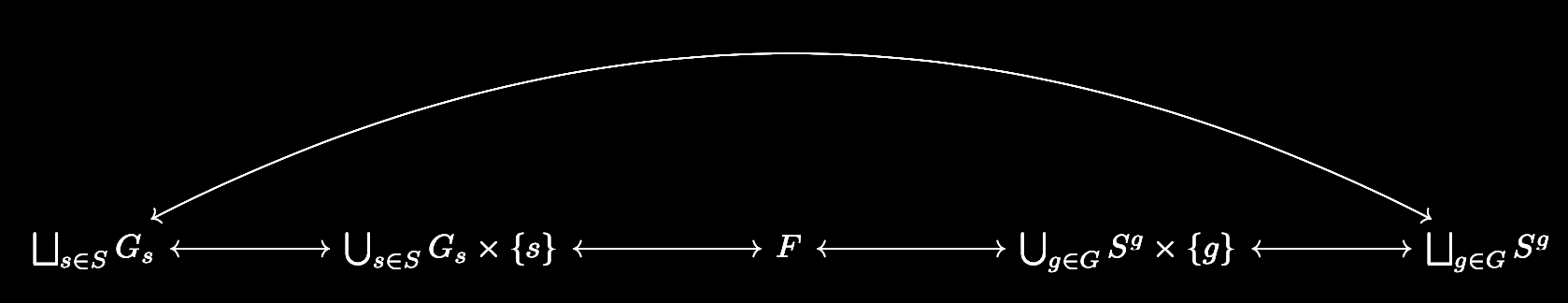

Recently, I have been thinking and learning a lot about algebraic geometry. I decided to write about the Zariski topology in my final project for my introductory algebraic geometry class. You can find the paper here.

I mainly discuss some of the basic topological properties of the Zariski topology on affine space. These are all elementary properties of the Zariski topology, but it is difficult to find a source anywhere actually listing these properties with proof, so I found it to still be instructive to think about the proofs.

One remarkable thing is that one can use the Zariski topology to prove the Cayley-Hamilton theorem, which is something I wrote about. I essentially filled in the details from here. The proof essentially shows that the collection of operators that have distinct eigenvalues is dense with respect to the Zariski topology. Since affine space is compact with that topology, the result really tells us that the collection of operators with distinct eigenvalues is precompact. This is a result reminiscent of the Arzelà-Ascoli theorem, which led me to wonder if affine space is sequentially compact under the Zariski topology. My hunch is that this is false.

I also briefly allude to the Zariski topology on the spectrum of a ring, but I don't really say much of substance about this. Over the summer, I plan on fleshing out this section and making precise the relationship between the topology on affine space and the topology on the spectrum of a ring. Based on what I've worked through in Atiyah-MacDonald, this relationship is not very easy to state.

Group actions are a powerful tool for establishing some very concrete results. One classic application is the classification of the finite subgroups of \(\mathrm{SO}(3)\). We will explore group actions more by establishing Burnside's lemma and Cayley's theorem.

The central result in the theory of group actions is the orbit-stabilizer theorem. For a group \(G\) acting on a set \(S\), and for any fixed \(s\in S\), the orbit-stabilizer theorem establishes the existence of a bijection between the set of cosets of the stabilizer of \(s\) in \(G\) and the orbit of \(s\). 
Combining this with the counting formula tells us that if \(G_s\) is the stabilizer of \(s\) and \(O_s\) is the orbit of \(s\), we must have \[|G|=|G_s||O_s|\] if \(G\) is finite. This fact enables us to prove the following result.

Burnside's Lemma: Let \(G\) be a finite group acting on the finite set \(S\). For each \(g\in G\) let \(S^g=\{s\in S\colon gs=s\}\). If \(N\) is the number of orbits induced by the group action, then \[|G|\cdot N=\sum_{g\in G}{|S^g|}.\]

Proof: Let us label the distinct orbits as \(O_{s_1},\dots,O_{s_N}\). Since the orbits partition \(S\), we have \[\begin{split} \sum_{s\in S}{\frac{1}{|O_s|}}&=\sum_{j=1}^{N}{\sum_{s\in O_{s_j}}{\frac{1}{|O_s|}}}\\ &=\sum_{j=1}^{N}{1}\\ &=N. \end{split}\] Multiplying both sides by \(|G|\) gives us \[|G|\cdot N=\sum_{s\in S}{\frac{|G|}{|O_s|}}.\] Let \(G_s\) be the stabilizer of \(s\). By the counting formula, we can rewrite the summand to obtain \[|G|\cdot N=\sum_{s\in S}{|G_s|}.\] Consider every formula of the form \(gs=s\) that is true under the group action, where \(g\in G\) and \(s\in S\). Each such formula corresponds to exactly one element in \(G_s\) (namely \(g\)) and one element in \(S^g\) (namely \(s\)). Conversely, every element in \(G_s\times \{s\}\) corresponds to exactly one formula of the aforementioned form, and similarly every element in \(S^g\times \{g\}\) corresponds to exactly one formula of the aforementioned form. So we obtain the following diagram of bijections: \[\bigsqcup_{s\in S}{G_s}\longleftrightarrow F\longleftrightarrow\bigsqcup_{g\in G}{S^g},\] where \(F\) is the set of all formulas of the aforementioned form. In particular, the composite bijection \(\bigsqcup_{s\in S}{G_s}\leftrightarrow\bigsqcup_{g\in G}{S^g}\) implies that

\[\sum_{s\in S}{|G_s|}=\sum_{g\in G}{|S^g|},\] and we are done. \(\square\)

Burnside's lemma finds application in places where we wish to compute the number of orbits \(N\). For instance, consider the set \(S\) of \(\binom{8}{4}=70\) colorings of an octagon where four of the edges must be black and the other four must be white. Since the dihedral group \(D_8\) is the group of symmetries of the octagon, it acts on this set, and we may consider the orbits of this action to correspond to distinct colorings. How many distinct colorings are there? By Burnside's lemma, the answer is \(\frac{1}{16}\sum_{g\in D_8}{|S^g|}\). Notice by our work above, this is the same thing as \(\frac{1}{16}\sum_{s\in S}{|G_s|}\). Why do we bother using the former sum over the latter? More deeply, why did Burnside (actually, it wasn't him) care more about expressing \(N\) in terms of \(\sum_{g\in G}{|S^g|}\) rather than \(\sum_{s\in S}{|G_s|}\)? Our example hints at the answer.

\(S^g\) represents the subset of colorings that remain fixed by the single symmetry \(g\). On the other hand, \(G_s\) represents the subgroup of symmetries that fix the single coloring \(s\). A little bit of thought is sufficient to see that \(S^g\) is in general a much simpler object to understand than \(G_s\). This holds in general: if the group acting on the set has a very complicated structure, it is very difficult to study the subgroup of it that fixes a single element of the set that it acts on. On the other hand, sets do not have any structure; they only have elements. By holding a group element fixed, the task becomes a matter of checking which elements in the set are fixed by that group element. This is a much easier task as it avoids the trouble of dealing with a potentially complicated group structure. 
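The octagon count can be checked by brute force. The following is a minimal sketch, not part of the original argument: it assumes the edges are labeled \(0,\dots,7\), models a coloring as the set of its four black edges, and models the 16 symmetries as the maps \(i\mapsto i+k\) and \(i\mapsto k-i\) modulo 8. The function names are illustrative choices. It computes \(\frac{1}{16}\sum_{g}{|S^g|}\) and, as a cross-check, counts the orbits directly.

```python
from itertools import combinations


def dihedral_permutations(n):
    """All 2n symmetries of an n-gon, as permutations of the edge labels 0..n-1."""
    perms = []
    for k in range(n):
        perms.append(tuple((i + k) % n for i in range(n)))  # rotation by k steps
        perms.append(tuple((k - i) % n for i in range(n)))  # a reflection
    return perms


def act(perm, coloring):
    """Apply a symmetry to a coloring, given as the frozenset of black edges."""
    return frozenset(perm[i] for i in coloring)


def count_orbits_burnside(n, n_black):
    """Number of orbits via Burnside's lemma: (1/|G|) * sum over g of |S^g|."""
    group = dihedral_permutations(n)
    colorings = [frozenset(c) for c in combinations(range(n), n_black)]
    total_fixed = sum(1 for g in group for s in colorings if act(g, s) == s)
    return total_fixed // len(group)


def count_orbits_directly(n, n_black):
    """Number of orbits by explicitly sweeping out each orbit once."""
    group = dihedral_permutations(n)
    seen = set()
    orbits = 0
    for c in combinations(range(n), n_black):
        s = frozenset(c)
        if s not in seen:
            orbits += 1
            seen.update(act(g, s) for g in group)
    return orbits


if __name__ == "__main__":
    print(count_orbits_burnside(8, 4), count_orbits_directly(8, 4))
```

Under these modeling assumptions the fixed-point counts sum to 128, so both functions report \(128/16=8\) distinct colorings. Note that the Burnside version never needs to know the stabilizer of any coloring; it only tests, one symmetry at a time, which colorings are left unchanged.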
In our case, this is to say that it is much easier to find the set of colorings that remain fixed under a given symmetry of the octagon than it is to find the set of symmetries that fix a given coloring of the octagon.

We can push group actions even further. Let \(G\) be a group. Let \(S\) be a set, and let \(\mathrm{Perm}\ S\) denote its permutations. Note that in the category of sets, the morphisms are just functions, so \(\mathrm{Perm}\ S\) is really just the automorphism group of \(S\). In particular, it has a group structure, and there may exist a homomorphism \(\varphi\colon G\to\mathrm{Perm}\ S\). Such a homomorphism is called a permutation representation of \(G\) (with respect to \(S\)). It is not hard to see that the set of group actions of \(G\) on \(S\) has a bijective correspondence with the set of permutation representations of \(G\) with respect to \(S\). For example, let \(A\) be a group action. Then it can be checked that the map \(\varphi_A\colon G\to\mathrm{Perm}\ S\) which sends \(g\in G\) to the automorphism on \(S\) which is \(A\)-multiplication by \(g\) is indeed a homomorphism. Conversely, it may be checked that any permutation representation defines an action of \(G\) on \(S\). This natural correspondence gives us a natural way to identify group actions.

If the permutation representation corresponding to a group action is injective, we say that the group action is faithful. Faithfulness is of course equivalent to the kernel of the corresponding permutation representation being trivial. The identity element of \(\mathrm{Perm}\ S\) is of course the identity map from \(S\) to itself. So for the kernel to be trivial, we want the action of \(g\) to be the identity map on \(S\) precisely when \(g\) is the identity element of \(G\).

We are now ready to prove a result that seems very deep to me at least.

Cayley's Theorem: Every finite group of order \(n\) is isomorphic to a subgroup of the symmetric group \(S_n\). 
Proof: Let \(G\) be a finite group. Consider the group action of \(G\) on itself that maps \((g,x)\mapsto gx\). There exists a permutation representation of \(G\) with respect to itself, namely the homomorphism \(\varphi\colon G\to\mathrm{Perm}\ G\) that maps \(g\) to the automorphism on \(G\) that is left-multiplication by \(g\). Of course, the only element \(g\in G\) such that \(gx=x\) for every \(x\in G\) is the identity, so this group action is faithful. In particular, \(\varphi\) is an injective homomorphism, so \(G\) is isomorphic to \(\varphi(G)\). But of course, \(\mathrm{Perm}\ G\) is isomorphic to \(S_n\) where \(n=|G|\). \(\square\) So in an abstract sense, the symmetric groups are complicated enough that they encode every other possible finite group. This is an interesting result. However, it turns out that the idea of a group acting on itself is what really matters here, and it is that concept which is eventually used to obtain the Sylow theorems. |
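The left-multiplication representation used in the proof of Cayley's theorem can be made concrete for a small group. The sketch below is illustrative only: it takes \(G\) to be the symmetric group on three letters (order 6), stores each element as a tuple, and the helper names `compose`, `left_regular_representation`, and `is_injective_homomorphism` are hypothetical. It builds, for each \(g\), the permutation of the six element indices induced by \(x\mapsto gx\), then checks that the resulting map is an injective homomorphism.

```python
from itertools import permutations


def compose(p, q):
    """Return the permutation p∘q (apply q first, then p)."""
    return tuple(p[q[i]] for i in range(len(q)))


def left_regular_representation(elements, multiply):
    """Map each g to the permutation of indices induced by x -> g*x."""
    index = {x: i for i, x in enumerate(elements)}
    return {g: tuple(index[multiply(g, x)] for x in elements) for g in elements}


def is_injective_homomorphism(elements, multiply, rep):
    """Check rep(g*h) == rep(g)∘rep(h) for all g, h, and that rep is injective."""
    respects_products = all(
        rep[multiply(g, h)] == compose(rep[g], rep[h])
        for g in elements
        for h in elements
    )
    injective = len(set(rep.values())) == len(elements)
    return respects_products and injective


# Example group: all permutations of {0, 1, 2}, multiplied by composition.
S3 = list(permutations(range(3)))
rep = left_regular_representation(S3, compose)

if __name__ == "__main__":
    print(is_injective_homomorphism(S3, compose, rep))
```

Each value `rep[g]` is a permutation of six indices, so the image of the representation sits inside \(S_6\), matching \(n=|G|=6\) in the statement of the theorem. Any other finite group could be substituted by supplying its element list and multiplication function.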


Archives

July 2023

|

RSS Feed
