|

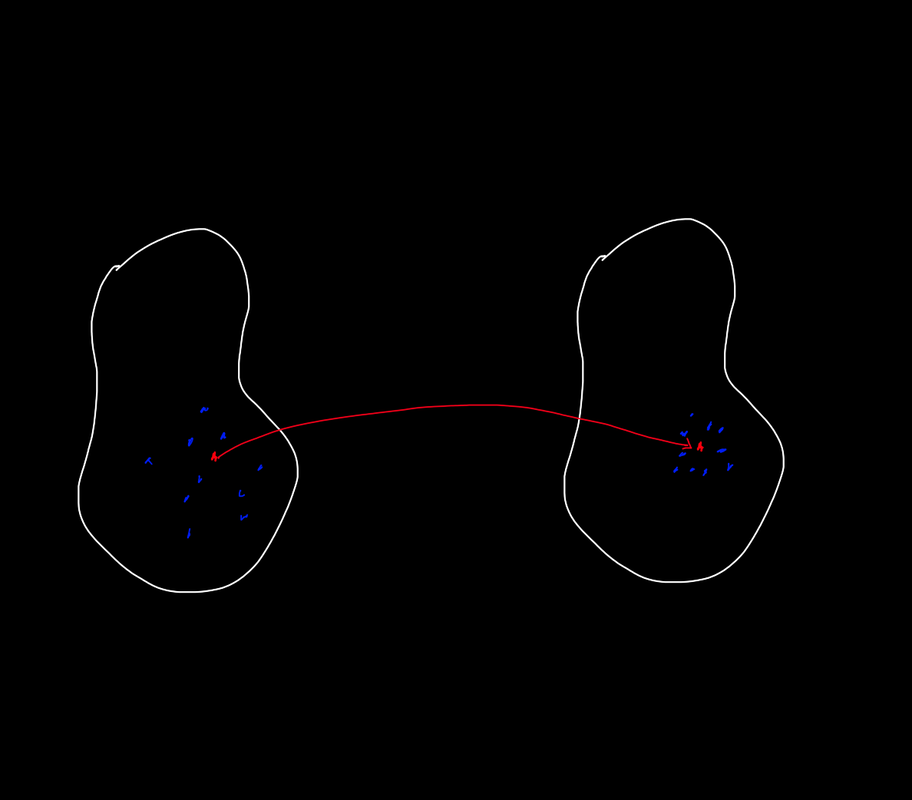

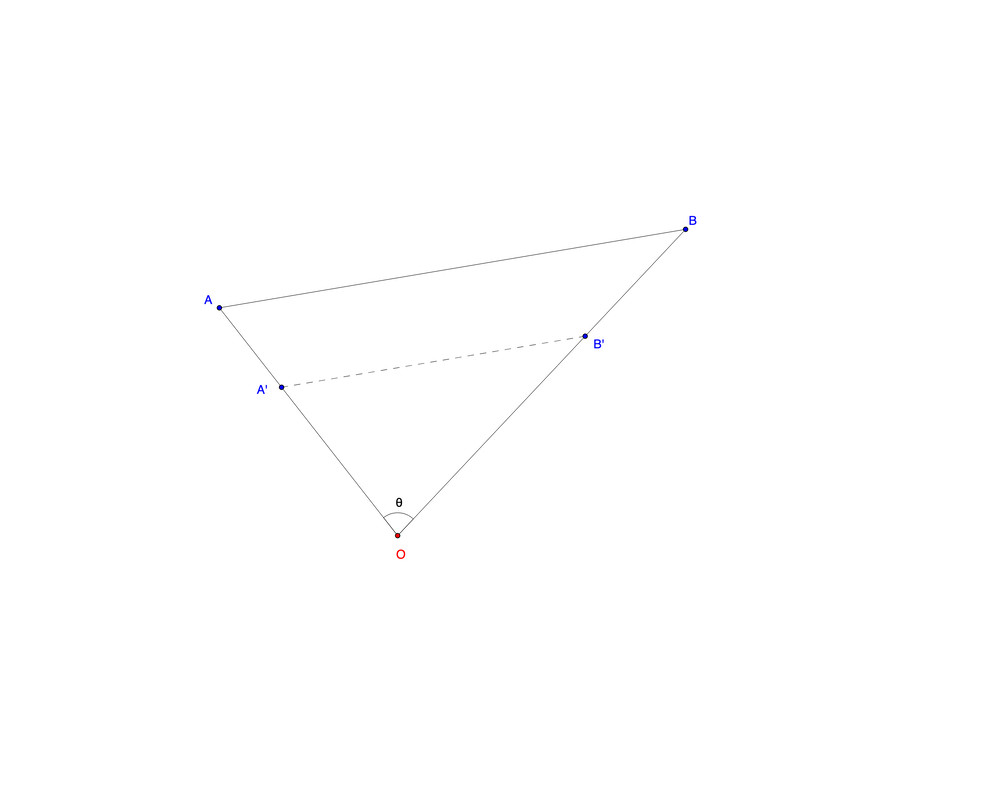

This last week in MATH 31BH, I learned the proof of the inverse function theorem. The theorem states that if \(f\colon U\to\mathbb{R}^n\) is continuously differentiable, where \(U\) is an open subset of \(\mathbb{R}^n\), and \([Df(\vec{x_0})]\) is invertible for some \(\vec{x_0}\in U\), then \(f\) is locally invertible at \(\vec{x_0}\).

Honestly, this result isn't particularly interesting to me. It is too natural and intuitive. It would be a lot more interesting if the result were the opposite of what it is. After all, the total derivative is the best local affine approximation of a function, so it isn't very extraordinary that a function inherits the invertibility of its approximation.

However, the proof of the inverse function theorem requires what is known as the contraction mapping theorem (also called the Banach fixed-point theorem), which is a central result in analysis. This is a lot more interesting, as it almost makes sense if you try to visualize it. Here is the theorem.

Theorem (Contraction Mapping Theorem): Let \(f\colon\overline{U}\to\overline{U}\) be continuous. Suppose that \(\exists c\in(0,1)\) such that \[|f(\vec{x})-f(\vec{y})|<c|\vec{x}-\vec{y}|\] \(\forall\vec{x},\vec{y}\in U\) with \(\vec{x}\neq\vec{y}\). Then \(\exists\vec{x}\in\overline{U}\) such that \(f(\vec{x})=\vec{x}\) (a fixed point).

Ah! This makes great sense. If you squish some points together, it would seem that some point must not move. Behold my great visualization. This is an example of a contraction mapping. Shown is a fixed point (in red) and some points in a neighborhood of it (in blue).

Perhaps this visualization reminds you of something. Indeed, a homothety on the plane with scale factor between \(0\) and \(1\) is an example (in particular, a special case) of a contraction mapping from \(\mathbb{R}^2\) to \(\mathbb{R}^2\)! How so? This is a homothety with center \(O\).

Consider two arbitrary points \(A\) and \(B\) in \(\mathbb{R}^2\), along with an arbitrary center \(O\). By the law of cosines, \[d(A,B)^2=d(A,O)^2+d(B,O)^2-2d(A,O)d(B,O)\cos{\theta},\] where \(\theta=\angle AOB\).

Now take a homothety about \(O\) with scale factor \(0<k<1\). The operation takes every point in the plane and multiplies its distance to \(O\) by \(k\). Denote the image of \(P\) as \(h(P)\). Since homotheties preserve angles, we have again by the law of cosines, \[\begin{split} d(h(A),h(B))^2&=d(h(A),h(O))^2+d(h(B),h(O))^2-2d(h(A),h(O))d(h(B),h(O))\cos{\theta}\\ &=k^2[d(A,O)^2+d(B,O)^2-2d(A,O)d(B,O)\cos{\theta}]\\ &=k^2d(A,B)^2, \end{split}\] so \(d(h(A),h(B))=kd(A,B)\). Hence, we can choose \(c=k+\frac{1-k}{2}\), which satisfies \(k<c<1\), to meet the definition of a contraction mapping.

The beautiful thing about this special case is that it makes it viscerally clear what it is we are generalizing. The contraction mapping theorem implies that there is a fixed point for our homothety. Indeed, \(O\) is a fixed point! Everything gets "squished" towards \(O\). Obviously it is no surprise that \(O\) is a fixed point: this follows trivially from the definition of a homothety (\(0\cdot k=0\)). The contraction mapping theorem is a stronger statement which essentially says that a more general "squishing" function still has "centers" that don't move.

I will relegate the proof of the contraction mapping theorem to an actual PDF post, but I figured I should give a little background and context here. The proof is the one covered at UC San Diego's MATH 31BH.
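For anyone who wants to poke at the homothety numerically, here is a minimal Python sketch. The names `homothety` and `iterate`, the center \(O=(1,-2)\), the scale factor \(k=0.5\), and the sample points are all my own choices for illustration, not anything from the course. It checks that distances shrink by exactly the factor \(k\), that \(O\) stays put, and that repeatedly applying \(h\) drags an arbitrary starting point towards \(O\).

```python
import math


def homothety(center, k):
    """Return the map P -> O + k (P - O) for a center O and scale factor k."""
    ox, oy = center

    def h(point):
        px, py = point
        return (ox + k * (px - ox), oy + k * (py - oy))

    return h


def iterate(f, point, steps):
    """Apply f to point repeatedly, steps times."""
    for _ in range(steps):
        point = f(point)
    return point


# Hypothetical sample data: any center and any 0 < k < 1 would do.
O = (1.0, -2.0)
k = 0.5
h = homothety(O, k)
A, B = (4.0, 3.0), (-2.0, 5.0)

# d(h(A), h(B)) / d(A, B) should equal the scale factor k.
ratio = math.dist(h(A), h(B)) / math.dist(A, B)

# Iterating h from A should approach the fixed point O.
limit = iterate(h, A, 60)

print("distance ratio:", ratio)
print("h(O):", h(O))
print("distance from O after 60 steps:", math.dist(limit, O))
```

After \(n\) applications the distance to \(O\) is \(k^n\) times the starting distance, which is why the iterates close in on the center so quickly.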


Here is a fun one.

1994 Wisconsin Mathematics Science & Engineering Talent Search PSET 3 Problem 2: Suppose that each of the three main diagonals \(\overline{AD}\), \(\overline{BE}\), and \(\overline{CF}\) divides the hexagon \(ABCDEF\) into two regions of equal area. Prove that the three diagonals meet at a common point.

Solution: Interesting. First, I drew the proper configuration, where the diagonals concur at a point \(P\) and each splits the hexagon into regions of equal area. In this case, six triangles were formed. I let \(T_1=\triangle APB\), and likewise labelled the rest of the triangles, proceeding clockwise with the labeling. Writing our three area conditions in terms of the areas of the \(T_i\)'s, I obtained the system of equations \[\left\{ \begin{array}{ll} [T_1]+[T_2]+[T_3]=[T_4]+[T_5]+[T_6]\\ [T_2]+[T_3]+[T_4]=[T_1]+[T_5]+[T_6]\\ [T_1]+[T_2]+[T_6]=[T_3]+[T_4]+[T_5]. \end{array}\right.\]

Wow. There is clearly a massive amount of symmetry in these equations. My instinct is to add them all together: \[3[T_2]+2[T_1]+2[T_3]+[T_4]+[T_6]=3[T_5]+2[T_6]+2[T_4]+[T_1]+[T_3].\] This rearranges to \[2[T_2]+([T_1]+[T_2]+[T_3])=2[T_5]+([T_4]+[T_5]+[T_6]).\] Aha! The sums in the parentheses are equal by the first equation of the system! So \([T_2]=[T_5]\). By symmetry, we must also have \([T_1]=[T_4]\) and \([T_3]=[T_6]\). These results can be obtained explicitly by flipping an equation in our original system before adding all of the equations together.

Conveniently, the triangles with equal areas also have equal angles at \(P\), since they are formed by the same two diagonals in each instance (the angles are vertical angles). Since the area of a triangle is given by \([ABC]=\frac{1}{2}ab\sin{C}\), and the enclosed angles are the same within each pair of triangles with equal area, the product of the lengths of the enclosing sides must also be the same. So I figured, let \(AP=a\), \(BP=b\), etc. Then, we must have \[\left\{ \begin{array}{ll} af=cd\\ de=ab\\ bc=ef. \end{array}\right.\]

At this point, I couldn't immediately see anything more insightful about the correct configuration. I wondered how to even prove concurrency. Perhaps I could work with a configuration that was not correct and show that it could not occur. So then, suppose that the three diagonals were not concurrent. Then there exists a seventh triangle, \(T_7=\triangle RST\), such that \(R=\overline{AD}\cap\overline{BE}\), \(S=\overline{AD}\cap\overline{CF}\), and \(T=\overline{BE}\cap\overline{CF}\). In this case, let \(T_1=\triangle ASF\), \(T_2=\triangle ARB\), \(T_3=\triangle BTC\), \(T_4=\triangle CSD\), \(T_5=\triangle DRE\), and \(T_6=\triangle ETF\).

Incredibly, when we write the area conditions out in this configuration, \([T_7]\) vanishes entirely! The resulting system is identical to what we obtained in the correct configuration, and once again we obtain \([T_1]=[T_4]\), \([T_2]=[T_5]\), and \([T_3]=[T_6]\). The manipulations required to obtain these equalities are slightly trickier, however, as we have to strategically subtract \([T_7]\) from both sides of our simplified sum of three equations.

Anyway, we let \(RS=\alpha\), \(ST=\beta\), and \(RT=\gamma\). We define the length from a vertex \(V\) of the hexagon to the nearest vertex of \(T_7\) on its diagonal to be the lowercase of \(V\). Then, turning our area conditions into length conditions using the product of side lengths, we obtain \[\left\{ \begin{array}{ll} af=(c+\beta)(d+\alpha)\\ de=(a+\alpha)(b+\gamma)\\ bc=(e+\gamma)(f+\beta). \end{array}\right.\]

Again, I see massive symmetry. This time, my instinct is to multiply the three equations together: \[abcdef=(a+\alpha)(b+\gamma)(c+\beta)(d+\alpha)(e+\gamma)(f+\beta).\] Aha! The product on the RHS is \(\geq abcdef\) since every variable is a length, and thus nonnegative. Because \(a\) through \(f\) are strictly positive, equality is achieved only when \(\alpha=\beta=\gamma=0\). In other words, \(T_7\) must be a degenerate triangle whose vertices, the pairwise intersections of the main diagonals, coincide at a single point. \(\square\)

Here are some problems from Multivariable and Vector Calculus by David Santos.
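As a sanity check on the hexagon length conditions \(af=cd\), \(de=ab\), \(bc=ef\) in the concurrent configuration, here is a small Python sketch. Everything in it is an assumption made for illustration: the function names, the sample lengths and angles, and the choice to place \(P\) at the origin and list the vertices counterclockwise. It picks \(a,b,c,e\) freely, forces \(d=ab/e\) and \(f=bc/e\) (the third condition then holds automatically), and uses the shoelace formula to confirm that each main diagonal splits the hexagon into two equal areas.

```python
import math


def shoelace_area(points):
    """Unsigned area of a simple polygon given as a list of (x, y) vertices."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def concurrent_hexagon(a, b, c, e, angle_apb, angle_bpc):
    """Hexagon ABCDEF whose main diagonals all pass through P = (0, 0).

    a, b, c, e are the distances PA, PB, PC, PE. The distances d = PD and
    f = PF are forced by ab = de and bc = ef; cd = fa then follows.
    """
    d = a * b / e
    f = b * c / e
    # Directions of the rays PA, PB, PC; D, E, F sit on the opposite rays.
    ta, tb, tc = 0.0, angle_apb, angle_apb + angle_bpc

    def polar(r, t):
        return (r * math.cos(t), r * math.sin(t))

    return [polar(a, ta), polar(b, tb), polar(c, tc),
            polar(d, ta + math.pi), polar(e, tb + math.pi),
            polar(f, tc + math.pi)]


def diagonal_splits(hexagon):
    """Areas on either side of AD, BE, CF, as a list of (area, area) pairs."""
    splits = []
    for i in range(3):
        first = [hexagon[(i + j) % 6] for j in range(4)]
        second = [hexagon[(i + 3 + j) % 6] for j in range(4)]
        splits.append((shoelace_area(first), shoelace_area(second)))
    return splits


# Hypothetical sample values; the two angles must sum to less than pi.
hexagon = concurrent_hexagon(2.0, 1.5, 1.0, 1.2, 1.0, 1.1)
for left, right in diagonal_splits(hexagon):
    print(round(left, 9), round(right, 9))
```

This only illustrates the easy direction (concurrent diagonals with matching products give equal areas); the proof above is what handles the converse.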

Varignon's Theorem: The quadrilateral formed by the midpoints of the sides of any quadrilateral is a parallelogram.

Proof: We proceed by vectors. Let our arbitrary quadrilateral be \(ABCD\), and let \(W\) be the midpoint of \(\overline{AB}\), \(X\) be the midpoint of \(\overline{BC}\), \(Y\) be the midpoint of \(\overline{CD}\), and \(Z\) be the midpoint of \(\overline{DA}\). Observe that it suffices to show that \(\mathbf{ZW}+\mathbf{ZY}=\mathbf{ZX}\). We see that \(\mathbf{ZW}=\mathbf{ZA}+\mathbf{AW}\). Furthermore, \(\mathbf{ZY}=\mathbf{ZD}+\mathbf{DY}=-\mathbf{ZA}+\mathbf{DY}\). Adding these two equations yields: \[\mathbf{ZW}+\mathbf{ZY}=\mathbf{AW}+\mathbf{DY}\]

We take a look at \(\mathbf{AW}\) and find \(\mathbf{AW}=\mathbf{WB}\) and \(\mathbf{WB}=\mathbf{WX}-\mathbf{BX}\), hence \(\mathbf{AW}=\mathbf{WX}-\mathbf{BX}\). In a similar manner we may show that \(\mathbf{DY}=\mathbf{YX}-\mathbf{CX}=\mathbf{YX}+\mathbf{BX}\). Therefore: \[\mathbf{AW}+\mathbf{DY}=\mathbf{YX}+\mathbf{WX}\] And so: \[\mathbf{ZW}+\mathbf{ZY}=\mathbf{YX}+\mathbf{WX}\]

We're almost done. We add \(\mathbf{YX}+\mathbf{WX}\) to both sides to obtain: \[\mathbf{ZW}+\mathbf{WX}+\mathbf{ZY}+\mathbf{YX}=2\left(\mathbf{YX}+\mathbf{WX}\right)\] Since \(\mathbf{ZW}+\mathbf{WX}=\mathbf{ZX}\) and \(\mathbf{ZY}+\mathbf{YX}=\mathbf{ZX}\), this simplifies to: \[2\mathbf{ZX}=2\left(\mathbf{YX}+\mathbf{WX}\right)=2\left(\mathbf{ZW}+\mathbf{ZY}\right)\] And dividing by \(2\), we obtain the desired result. \(\square\)

Problem: Let \(X\), \(Y\), and \(Z\) be points on the plane with \(X\neq Y\). Demonstrate that the point \(A\) belongs to \(\overleftrightarrow{XY}\) if and only if there exist scalars \(\alpha,\beta\) with \(\alpha+\beta=1\) such that: \[\mathbf{ZA}=\alpha\mathbf{ZX}+\beta\mathbf{ZY}\]

Solution: We choose coordinates so that \(\overleftrightarrow{XY}\) is parallel to the standard horizontal axis. Then, the component orthogonal to \(\overleftrightarrow{XY}\) of any vector from \(Z\) to a point of \(\overleftrightarrow{XY}\) must be a constant, say \(k\). Hence we may write: \[\mathbf{ZX}=\left<a,k\right>\] \[\mathbf{ZY}=\left<b,k\right>\]

Suppose \(\exists\alpha,\beta\) such that \(\mathbf{ZA}=\alpha\mathbf{ZX}+\beta\mathbf{ZY}\) and \(\alpha+\beta=1\). Then it follows that we may write: \[\mathbf{ZA}=\left<\alpha a+\beta b,(\alpha+\beta)k\right>\] But since \(\alpha+\beta=1\), this becomes: \[\mathbf{ZA}=\left<\alpha a+\beta b,k\right>\] Since the component orthogonal to \(\overleftrightarrow{XY}\) of \(\mathbf{ZA}\) is equal to the constant \(k\), \(A\) must lie on \(\overleftrightarrow{XY}\).

The converse of the above is also true. Suppose that \(A\) does lie on \(\overleftrightarrow{XY}\). Then: \[\mathbf{ZA}=\left<c,k\right>\] We can uniquely determine \(\alpha,\beta\) by solving the system: \[\left\{\begin{array}{ll}\alpha a+\beta b=c\\ \alpha+\beta=1\end{array}\right.\] A unique solution exists because we are given \(X\neq Y\) and thus \(a\neq b\), so the system is independent. Those values of \(\alpha\) and \(\beta\) give the relation: \[\mathbf{ZA}=\alpha\mathbf{ZX}+\beta\mathbf{ZY}\] Hence, \(A\) lies on \(\overleftrightarrow{XY}\) iff \(\alpha\) and \(\beta\) exist as described. \(\square\) |
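The collinearity criterion above is easy to try on concrete points. Below is a minimal Python sketch; the function name `affine_coefficients`, the tolerance, and the sample points are hypothetical choices of mine. Note that it does not use the axis-aligned coordinates from the solution: it works with \(A-Y=\alpha(X-Y)\), which is the same condition rewritten, since \(\mathbf{ZA}=\alpha\mathbf{ZX}+\beta\mathbf{ZY}\) with \(\alpha+\beta=1\) is equivalent to \(A=\alpha X+\beta Y\) for any choice of \(Z\).

```python
def affine_coefficients(X, Y, A, tol=1e-9):
    """Return (alpha, beta) with alpha + beta = 1 and A = alpha*X + beta*Y.

    Returns None when A does not lie on line XY (within tolerance tol).
    Assumes X != Y.
    """
    dx, dy = X[0] - Y[0], X[1] - Y[1]  # the vector X - Y
    ax, ay = A[0] - Y[0], A[1] - Y[1]  # the vector A - Y
    # A is on line XY exactly when A - Y is parallel to X - Y.
    if abs(dx * ay - dy * ax) > tol:
        return None
    # Solve A - Y = alpha (X - Y) by projecting onto X - Y.
    alpha = (ax * dx + ay * dy) / (dx * dx + dy * dy)
    return alpha, 1.0 - alpha


def vector(P, Q):
    """The vector from P to Q."""
    return (Q[0] - P[0], Q[1] - P[1])


# Hypothetical sample points: A = (6, 3) lies on the line through X and Y.
X, Y, Z = (0.0, 0.0), (4.0, 2.0), (1.0, 5.0)
A = (6.0, 3.0)

alpha, beta = affine_coefficients(X, Y, A)
ZX, ZY, ZA = vector(Z, X), vector(Z, Y), vector(Z, A)
combo = (alpha * ZX[0] + beta * ZY[0], alpha * ZX[1] + beta * ZY[1])

print("alpha, beta:", alpha, beta)
print("ZA:", ZA, "alpha*ZX + beta*ZY:", combo)
print("off-line point:", affine_coefficients(X, Y, (6.0, 4.0)))
```

Here \(A\) lies beyond \(Y\) on the line, so one coefficient comes out negative; the coefficients are both in \([0,1]\) only when \(A\) is on the segment between \(X\) and \(Y\).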


|
